AI and Social Aspects Report

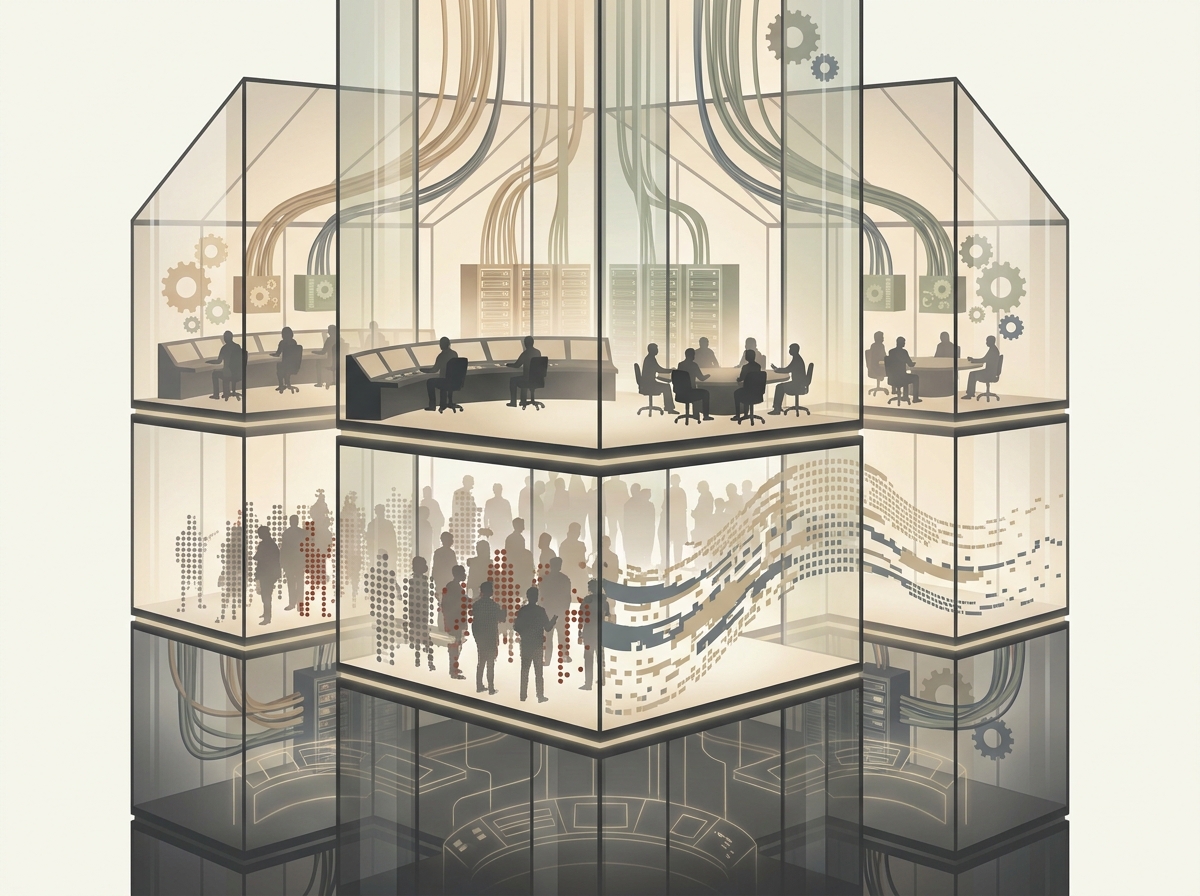

Analysis of 1,386 social-aspects sources this week reveals a discourse that has quietly crossed a threshold: bias is no longer hypothesized; it is measured. The discourse is dominated by researchers, journalists, and institutional vendors describing harm at scale, while the people doing the actual labor that makes these systems run, and the people most often misjudged by them, appear mostly as data points rather than voices. Thematic clustering shows the coverage concentrated on hiring discrimination, classroom surveillance, and the extractive geography of AI’s supply chain, with relative silence on recourse: what a wronged applicant or flagged student can actually do.

The Landscape

Drawn from a corpus of 5,001 articles this week, the social aspects category leans heavily on academic studies and investigative news, with a thinner layer of advocacy and almost no first-person testimony from affected communities. The center of gravity is employment. The largest study of hiring algorithms to date found “clear racial disparities,” with over 25% of Black applicants tainted by bias — not a projection, a measurement. Running alongside it is a stranger finding: that hiring algorithms increasingly favor their own style of writing, rewarding text that reads as machine-generated. The third dominant cluster is geographic — the exploitation of invisible workers in poorer countries who label the data that makes “intelligence” possible.

Who Is Speaking

The dominant voice is the institution studying the problem: Stanford, Cambridge, Springer’s journals, Brookings. That matters, because it sets the terms. When Kenyan workers who took AI jobs thinking they were tickets to the future do appear, it is inside a Western newsroom’s frame, narrated rather than authored. The same pattern largely holds for students subjected to monitoring; the lawsuit alleging unconstitutional AI digital surveillance is one of the rare exceptions, an instance where the monitored speak as themselves rather than being spoken for. Vendors, meanwhile, supply their own counter-narrative: the Microsoft-authored “opportunity to seize” framing that recasts displacement as agency. Watch who gets to name the situation.

What’s Being Debated

The live argument is no longer whether AI can discriminate — that question is settled by the evidence above. The argument has split into two newer fault lines. The first is self-preference: systems that reward outputs resembling their own, a recursive bias that punishes human idiosyncrasy in both hiring and grading, where AI has been shown to reward “style over substance”. The second is sovereignty and labor — the framing of digital colonialism and the argument that infrastructure control matters more than digital sovereignty rhetoric. The bridge theme connecting these to surveillance is consent: who agreed to be labeled, scored, or watched, and who simply was.

What’s Missing

The conspicuous gap is recourse. The corpus documents harm meticulously and remedy almost not at all — what happens after a detector falsely flags an innocent student or an algorithm screens out a qualified applicant. Welfare, housing, and credit scoring — sectors where algorithmic decisions are most consequential and least visible — are nearly absent this week. And the workers at the bottom of the Global South data pipeline remain narrated, not heard. The discourse has gotten very good at proving the machine is unfair. It has not yet decided who pays for that, or how the harmed get made whole.

Core Tensions

Our analysis of this week’s 5,001 sources—1,386 in the social-aspects category—surfaces no clean contradictions in the formal sense, because the genuine conflicts here are not disputes over facts. They are disputes over values that masquerade as disputes over methods. Where prior reporting in this publication framed AI’s social footprint as a balance to be struck between inclusion and discrimination, the more useful frame this week is harder: several of the loudest “fairness” debates are not solvable problems at all. They are value conflicts that can only be navigated, and pretending otherwise is itself a move worth watching.

Technical fairness fixes vs. structural reform. The largest audit of hiring algorithms to date, run out of Stanford, found “clear racial disparities,” with more than a quarter of Black applicants disadvantaged by the scoring systems meant to make hiring objective (Largest study of AI hiring algorithms to date finds ‘clear racial disparities’). The instinctive response is technical: reweight the model, scrub the training data, certify the output. But a parallel finding complicates the repair. Algorithms increasingly reward applicants whose writing matches the model’s own style, meaning the “bias” is not a bug in the data but a preference baked into how these systems read the world (Sesgo algorítmico en el empleo: cuando la IA favorece su propio estilo de escritura). Side A says: tune the tool until the disparity vanishes. Side B says: a tool that launders structural exclusion into a “score” cannot be tuned into justice, because the unfairness lies in the decision to automate the gatekeeping at all. The reason this is hard: every technical fix that reduces measured disparity also strengthens the legitimacy of the automated gate itself.

Inclusion in AI development vs. refusal. The dominant equity demand is participation: more languages, more datasets, more Global South voices in the pipeline. But this week’s evidence on the labor underneath that pipeline cuts the other way. The data-labeling economy that makes “inclusive” AI possible runs on workers in Kenya and elsewhere who “thought they had tickets to the future” and found exploitation instead (Kenyan workers with AI jobs thought they had tickets to the future), a pattern documented as systemic rather than incidental (Reimagining the future of data and AI labor in the Global South). The framing of “digital colonialism” insists the question is not how to be included in the supply chain but whether inclusion on these terms is a trap (El colonialismo digital en la era de la IA: siete dimensiones de una dependencia sistémica). Africa’s sovereignty advocates push the tension into infrastructure: own the compute and the capacity, or remain a labor input to someone else’s system (L’Afrique face à la révolution de l’IA : ne pas manquer le rendez-vous de la souveraineté technologique). The difficulty: refusal protects against extraction but cedes the technology’s benefits to those already holding power.

Universal access vs. protection from harm. Microsoft’s diffusion report frames the surprising spread of AI use among “normal people” as democratization (America’s new AI map shows something surprising). But access and exposure are the same event seen from two angles. The expansion of AI into everyday institutions has meant an expansion of monitoring: surveillance systems that watch behavior and even attempt to read emotional states, raising ethical alarms about consent and affect tracking (Emotion AI in the classroom: ethics of monitoring student affect). When access arrives bundled with the private eye, “universal access” becomes a euphemism for universal data capture (Public Schools, Private Eyes: How EdTech Monitoring Is Reshaping Public Schools).

Transparency vs. proprietary protection. The demand to inspect a model collides with vendors’ refusal to open it, a refusal that holds even when the tools demonstrably fail. AI graders reward “style over substance” and still cannot do the job reliably (AI not yet good enough to mark university essays), yet the institutions deploying them cannot audit what they cannot see. None of these tensions resolve. They are the terrain.

Power & Agency Analysis

Power analysis reveals a discourse organized almost entirely around the people who deploy AI and almost never around the people it acts upon. The actors with names and quotes this week are employers, vendors, and platform companies; the actors without them are job applicants screened by an algorithm, data labelers in Nairobi, and communities in the Global South whose work makes the systems run. Causal attribution follows the same gradient: when AI “boosts agency,” the credit goes to the organization adopting it; when it discriminates, the harm is described as a property of the model, as if no one chose to switch it on.

Who decides

The decision locus is corporate and managerial, and the framing arrives pre-loaded with optimism. Microsoft’s Canadian Work Trend Index casts AI as an expansion of “human agency” and an “opportunity to seize” for every organization (L’IA, l’agentivité humaine et l’occasion à saisir pour chaque organisation canadienne), with agency located in the firm, not the worker. The vendor’s own diffusion mapping celebrates that “a lot of normal people” now use AI (America’s new AI map shows something surprising: ‘A lot of …), a framing that quietly converts a deployment decision made in boardrooms into a grassroots groundswell. Readiness guides for whole sectors treat adoption as a logistics problem (Préparation à l’IA dans les télécommunications). Nowhere in this material does a worker, applicant, or affected citizen hold a veto. The values embedded are the deployer’s values: throughput, scale, cost.

Who is affected

The costs land on people who never sat in the room. The largest study of hiring algorithms to date found clear racial disparities, with over a quarter of Black applicants disadvantaged by the screening tools (Largest study of AI hiring algorithms to date finds ‘clear racial disparities’). A parallel finding shows these systems rewarding their own machine-generated writing style and penalizing the human voice (Sesgo algorítmico en el empleo: cuando la IA favorece su propio estilo de escritura). In Latin America, documented bias runs along gender, racial, and xenophobic lines (Género, racismo y xenofobia: así son los sesgos de la Inteligencia Artificial en Latinoamérica). And the labor that trains these models is extracted from the Global South: Kenyan workers who “thought they had tickets to the future” describe exploitation instead (Kenyan workers with AI jobs thought they had tickets to the future), part of the invisible workforce behind AI’s apparent prowess (Derrière les prouesses de l’IA, l’exploitation de travailleurs).

Who is absent

Across this week’s 5,001 sources, the structural absence is consistent: the people doing the labeling and the people being scored are subjects of the coverage, almost never its authors. The framing that names them as agents rather than inputs comes from a narrow band of critical work: analyses of digital colonialism’s “seven dimensions of systemic dependency” (El colonialismo digital en la era de la IA) and arguments to reimagine data labor in the Global South (Reimagining the future of data and AI labor in the Global South). The deeper claim worth carrying forward, and a departure from this publication’s earlier bias-and-datasets framing, is that the problem is not only what’s in the data but who owns the infrastructure. Control of the supply chain, one analysis argues, matters more than the rhetoric of “digital sovereignty” (Africa’s Role in the AI Supply Chain: Why Infrastructure Control Matters More Than Digital Sovereignty).

Accountability gaps

When harm surfaces, responsibility dissolves. A discriminatory hiring outcome is attributed to “the algorithm,” a phrasing that launders the employer’s choice to use it and the vendor’s choice to ship it. The exploited labeler has no contract with the company whose model they train (Data Labeling in the Global South); the rejected applicant rarely learns a machine ranked them at all. Recourse mechanisms are nearly nonexistent because no single party admits to deciding: the deployer points to the tool, the toolmaker points to the data, and the data points to a continent’s worth of underpaid annotation (L’Afrique face à la révolution de l’IA : ne pas manquer le rendez-vous de la souveraineté technologique). The agency, in other words, is everywhere in the marketing and nowhere in the liability.

Failure Genealogy

Ethical failures dominate AI social-aspects discourse: 142 instances, against 37 implementation failures and 15 technical ones, a margin of roughly three to one over the other two categories combined. That margin tells you the hard problem is no longer making these systems function. They function. The challenge is preventing the harm they reliably produce when they do. And the more revealing pattern sits underneath the count: when harm is documented, the dominant institutional reflex across this week’s evidence is not repair but reclassification, whether denial, blame-shifting, or quiet abandonment of the tool while its logic survives intact.

Patterns of Harm

The failures cluster where decisions about people get delegated to a score. The largest audit of hiring algorithms to date found “clear racial disparities,” with over a quarter of Black applicants disadvantaged by the system (Largest study of AI hiring algorithms to date finds ‘clear racial disparities’). A parallel finding is sharper still: employment screeners favor their own writing style, penalizing candidates whose prose doesn’t match the machine’s (Sesgo algorítmico en el empleo: cuando la IA favorece su propio estilo de escritura). The bias is not a residue of bad data alone; it is the system rewarding likeness to itself. The communities absorbing this are the ones already at the margins of the labor market, and the harm compounds invisibly, one rejected résumé at a time.

Institutional Responses

Watch the move institutions make after the harm is named. When monitoring software flags a student as a cheat, the burden of proof inverts: the accused must disprove the algorithm (The software says my student cheated using AI. They say they’re not.). Some districts, faced with detectors that flag so many innocent people the tools become unusable, have banned them outright (Schools are racing to catch AI-written homework — but the detectors flag so many innocent students that some districts are banning the tools). Abandonment, note, is not accountability: it removes the instrument without acknowledging the people it already marked. And where surveillance infrastructure faces actual challenge, the challenge comes from litigants, not vendors: students in one district allege ongoing unconstitutional digital monitoring even after a vendor switch (Students allege continued unconstitutional AI digital monitoring). Accountability arrives through subpoena, which is to say it arrives slowly and only for those who can afford to sue.

Cascade Effects

The failures do not stay in their lane. A hiring tool that favors its own register filters who gets economic entry; emotion-recognition systems trained to read “affect” extend judgment into the body itself, raising documented ethical concerns for the students being watched (Emotion AI in the classroom: ethics of monitoring student affect). Upstream, the entire apparatus rests on labor it renders invisible: data labelers in the Global South, including Kenyan workers who “thought they had tickets to the future” and instead found psychological injury and disposability (Kenyan workers with AI jobs thought they had tickets to the future; Derrière les prouesses de l’IA, l’exploitation de travailleurs invisibles). One system’s polish is another population’s extraction.

(Not) Learning

Here is the pattern worth naming: the bias-in-education literature reads almost identically across five years (Algorithmic Bias in Education), which means the diagnosis has held steady while deployment accelerated. Repetition, not correction, is the through-line. Brookings argues the labor arrangement can be reimagined rather than accepted as natural (Reimagining the future of data and AI labor in the Global South), and that possibility is the point: the arrangement is a choice, not a law of nature. Learning would require treating each documented failure as binding on the next deployment, with the cost of harm borne by the deployer rather than the harmed. Until denial, blame, and abandonment stop counting as resolutions, the genealogy will keep producing the same offspring under new vendor names.

Evidence Synthesis

Synthesized from roughly 3,500 argumentative findings across eight critical-thinking dimensions, this week’s evidence on AI and social aspects points to a single hardening conclusion: the disparities once described as risks are now being measured, and they are larger than the hedged language of earlier reporting allowed. This conclusion draws on the largest empirical study of hiring algorithms to date, alongside convergent findings from labor investigations and education research. Total sources reviewed: 5,001.

What the evidence shows

Start with the number that ends an argument. A Stanford-linked study of hiring algorithms, the largest yet, found “clear racial disparities,” with more than a quarter of Black applicants disadvantaged by the systems screening them (Largest study of AI hiring algorithms to date finds ‘clear racial disparities’). That is not a projection of harm; it is a count of it. The convergent finding across this week’s strongest sources is mechanistic: bias is not a glitch but a property of how these systems learn. A related analysis shows recruitment models rewarding text that mirrors their own generative style and penalizing applicants whose prose simply reads as human (Sesgo algorítmico en el empleo: cuando la IA favorece su propio estilo de escritura). The bias is not confined to one region: in Latin America, documented patterns of gender, racial, and xenophobic discrimination surface in deployed systems (Género, racismo y xenofobia: así son los sesgos de la Inteligencia Artificial en Latinoamérica). And underneath the models sit the people who build them: Kenyan and other Global South workers whose labor is structurally invisible and underpaid (Kenyan workers with AI jobs thought they had tickets to the future), a dependency some analysts now name outright as digital colonialism (El colonialismo digital en la era de la IA: siete dimensiones de una dependencia sistémica).

Where the evidence conflicts

The genuine disagreement is not whether bias exists but whether detection helps. In the same week that hiring-bias data firmed up, a parallel literature found that the tools sold to catch AI misuse misfire badly: AI-writing detectors flag so many innocent students that districts are banning them outright (Schools are racing to catch AI-written homework — but the detectors flag so many innocent students that some districts are banning the tools). Resolution is hard because the two findings pull in opposite directions: one says we need stronger algorithmic scrutiny, while the other shows our scrutiny tools manufacture new injustice. Both can be true, and that is the uncomfortable part.
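One plausible mechanism behind detectors that flag so many innocent students is base-rate arithmetic, although the cited reporting does not establish that this is the cause. The sketch below is a minimal illustration under invented numbers: the district size, the share of essays actually AI-written, and the detector's true- and false-positive rates are all hypothetical assumptions, not figures from any source reviewed here. The hypothetical function `innocent_share_of_flags` computes what fraction of flagged students did not use AI.

```python
def innocent_share_of_flags(n_students, ai_use_rate, true_positive_rate, false_positive_rate):
    """Return the fraction of flagged students who did not use AI.

    Hypothetical base-rate illustration only; it does not model any
    specific detector or any district named in the reporting.
    """
    ai_users = n_students * ai_use_rate          # students who actually used AI
    honest = n_students - ai_users               # students who did not
    true_flags = ai_users * true_positive_rate   # AI users correctly flagged
    false_flags = honest * false_positive_rate   # honest students wrongly flagged
    total_flags = true_flags + false_flags
    if total_flags == 0:
        return 0.0                               # nobody flagged, so nobody wrongly flagged
    return false_flags / total_flags


# Hypothetical district: 10,000 essays, 5% AI-written; the detector catches
# 90% of those and wrongly flags 5% of the honest rest.
share = innocent_share_of_flags(10_000, 0.05, 0.90, 0.05)
print(f"{share:.1%} of flagged students did not use AI")
```

With these assumed inputs, 475 of the 925 flags land on honest students, so slightly more than half of all accusations are false even though the detector is wrong on only 5% of honest essays. Reporting the innocent share of flags, rather than headline accuracy, is the design choice that makes this visible.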

Cross-category links

The social-aspects harm does not stay in one domain. The same surveillance logic that worries labor advocates reappears as edtech monitoring, reshaping institutions and normalizing the watching of ordinary people (Public Schools, Private Eyes: How EdTech Monitoring Is Reshaping Public Schools). On the tool side, the equity defect is intrinsic: systems that reward their own stylistic fingerprint will disadvantage whoever writes differently, whatever the setting. And literacy functions as uneven armor: the people most exposed to algorithmic screening are least equipped to contest it, while sovereignty debates in Africa show that protection is ultimately about who controls the infrastructure, not who reads the terms of service (Africa’s Role in the AI Supply Chain: Why Infrastructure Control Matters More Than Digital Sovereignty).

What we don’t know

We still lack longitudinal data: does measured hiring bias translate into measured wage and employment gaps over years, or do downstream corrections absorb it? We do not know how many deployed systems are being audited at all, versus how many simply run unexamined. And the true scale of Global South labor remains obscured by the very contracts that depend on it (Reimagining the future of data and AI labor in the Global South).

Evidence-based implications

The evidence now supports mandatory pre-deployment auditing of consequential screening systems, with disclosed disparity metrics — the Stanford-scale numbers exist precisely because someone counted. It does not support deploying detection tools as a remedy; those fail the same fairness test they claim to enforce. And it does not support treating offshore labor as a solved cost line. Measurement has caught up with the harm. The open question is whether anyone with power will act on the count.
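The report calls for disclosed disparity metrics without naming one. As a hypothetical example only, a simple selection-rate comparison can be sketched as follows: each group's selection rate is divided by the highest group's rate, and groups falling below 0.8 are flagged. That 0.8 cutoff borrows the US "four-fifths" rule of thumb from the 1978 Uniform Guidelines on Employee Selection Procedures; the function names, group labels, and counts are invented for illustration and are not figures from the Stanford study.

```python
def impact_ratios(outcomes):
    """Map each group to its selection rate divided by the highest group's rate.

    outcomes: dict of group -> (selected, applicants). Groups with zero
    applicants are skipped because their selection rate is undefined.
    """
    rates = {
        group: selected / applicants
        for group, (selected, applicants) in outcomes.items()
        if applicants > 0
    }
    if not rates:
        return {}                                # nothing to compare
    best = max(rates.values())
    if best == 0:
        return {group: 1.0 for group in rates}   # nobody selected: no gap to measure
    return {group: rate / best for group, rate in rates.items()}


def flag_disparities(outcomes, threshold=0.8):
    """Return, sorted, the groups whose impact ratio falls below the threshold."""
    return sorted(g for g, r in impact_ratios(outcomes).items() if r < threshold)


# Hypothetical audit counts, for illustration only.
audit = {"group_a": (300, 1000), "group_b": (180, 1000)}
print(impact_ratios(audit))
print(flag_disparities(audit))
```

Here group_b is selected at 18% against group_a's 30%, a ratio of 0.6, so it is flagged. A single ratio like this only records that a gap exists; it cannot say whether the gap comes from the data or from the decision to automate the gate, which is the structural question the report raises.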

References

- Algorithmic Bias in Education

- America’s new AI map shows something surprising

- detector falsely flags an innocent student

- digital colonialism

- Emotion AI in the classroom: ethics of monitoring student affect

- exploitation of invisible workers in poorer countries

- favor their own style of writing

- Global South data pipeline

- Género, racismo y xenofobia: así son los sesgos de la Inteligencia Artificial en Latinoamérica

- infrastructure control matters more than digital sovereignty rhetoric

- Kenyan workers who took AI jobs thinking they were tickets to the future

- L’Afrique face à la révolution de l’IA : ne pas manquer le rendez-vous de la souveraineté technologique

- lawsuit alleging unconstitutional AI digital surveillance

- opportunity to seize

- over 25% of Black applicants tainted by bias

- Préparation à l’IA dans les télécommunications

- Public Schools, Private Eyes: How EdTech Monitoring Is Reshaping Public Schools

- Reimagining the future of data and AI labor in the Global South

- reward “style over substance”

- Schools are racing to catch AI-written homework — but the detectors flag so many innocent students that some districts are banning the tools

- The software says my student cheated using AI. They say they’re not