Through Toffler’s Lens

The Detection Arms Race

April 26, 2026 | 2461 words

A War Fought in the Wrong Century

Every week, somewhere in the bowels of an educational technology vendor, an engineer ships a model update. Turnitin’s AI detector gets a new classifier head. GPTZero retrains on fresh samples. Originality.ai publishes a blog post announcing improved accuracy against Claude 3.5. Within days, sometimes within hours, a Reddit thread documents the workaround. A “humanizer” tool ingests the flagged text, perturbs sentence rhythm, shifts register, and pushes the output back below the detection threshold. The detector vendor responds. The humanizer vendor responds to the response. Universities pay license fees to both sides of this exchange without quite registering that they are doing so.

Reported as a technology story, this looks like a normal cat-and-mouse cycle: spam versus spam filters, doping versus drug testing, malware versus antivirus. That framing is wrong, and the wrongness matters. Spam filters work because spam has a purpose distinguishable from legitimate mail. Drug tests work because the human body, absent intervention, does not produce synthetic testosterone. The detection arms race in writing has no such ground truth. Text is text. A sentence produced by a graduate student at 2 a.m. after four espressos and a sentence produced by GPT-4 prompted to imitate a tired graduate student are, at the level of tokens, not reliably distinguishable — and the gap is closing, not widening, with every model generation.

What Toffler’s framework allows us to see, and what the discourse around academic integrity stubbornly will not, is that this is not a technical problem awaiting a technical fix. It is a Second Wave literacy regime colliding with a Third Wave production reality. The collision generates heat — disciplinary hearings, anxious faculty meetings, vendor contracts, student panic — but the heat is a symptom of category failure, not of insufficient enforcement. The old regime assumed single authorship, fixed text, and originality-as-provenance. The new production reality is distributed authorship, fluid text, and originality-as-something-else-we-have-not-yet-named. No detector can resolve a category collision. Detectors can only price it.

This column will argue that the detection arms race is unwinnable not because the tools are bad but because the ontology underneath them has dissolved, and that AI Literacy — as a field, as a teaching practice, as a competency framework — is the only place where the dissolution can be honestly described rather than managed away.

The Second Wave Authorship Contract

To understand why the detection regime is collapsing, one has to see what it was built to protect. Toffler’s The Third Wave describes Second Wave civilization as organized around a tight cluster of standardizing principles: standardized goods, standardized time, standardized education, standardized work. Print literacy was the medium and the model. The mass-produced book required a mass-producible reader: someone trained to decode linear prose, to attribute single authorship, to honor the boundary between one mind’s text and another’s.

The authorship contract that emerged from this regime had three load-bearing assumptions. First, that a text has one author, or a small named set of authors, who own its propositions. Second, that the text, once produced, is fixed — that we can hold it up and ask, of any given sentence, whether it came from inside or outside the author. Third, that originality is a matter of provenance: the question “did you write this?” is meaningful and answerable, and the answer is morally consequential.

These three assumptions are the deep structure of every plagiarism policy, every citation manual, every Turnitin similarity score. They are also the deep structure of how faculty were taught to read student work — not as a literacy choice but as a professional reflex absorbed across decades. When a writing instructor reads a student paper and senses something is “off,” that intuition is trained on the Second Wave authorship contract. The instructor is not detecting machine text. The instructor is detecting deviation from a baseline of mass-produced student writing that the educational system itself manufactured.

This is the point Toffler makes most uncompromisingly across The Third Wave and Future Shock: Second Wave institutions do not merely produce goods; they produce the categories by which the goods are evaluated. Standardized testing produces the standardized test-taker. Five-paragraph essay instruction produces the recognizable five-paragraph student voice. Detection tools are not neutral instruments scanning for objective machine signals. They are pattern matchers calibrated against a manufactured baseline of “what student writing sounds like,” and that baseline was never natural, never stable, and is now collapsing.

De-massification and the Vanishing Baseline

Toffler’s concept of de-massification, developed across The Third Wave and elaborated in Revolutionary Wealth, is doing the heaviest work in this analysis. The Second Wave produced mass audiences, mass markets, mass curricula. The Third Wave fragments them. De-massified media replaced three networks with millions of channels. De-massified work replaced the assembly line with the project team. De-massified production replaced standardized goods with configurable ones.

What detection vendors have not yet absorbed — what their entire business model depends on not absorbing — is that writing is now de-massifying. The baseline of “what a college sophomore’s argumentative essay sounds like” was already eroding before ChatGPT shipped. International students, first-generation students, neurodivergent writers, students fluent in registers acquired from social media, students who learned English from K-pop subtitles and Discord servers — the supposed monoculture of academic prose was always a fiction maintained by aggressive correction at the margins. Generative models have not destroyed a stable baseline. They have made the absence of a baseline impossible to ignore.

This is why detection accuracy claims do not survive contact with non-native English writers. The widely reported finding that GPT-detectors flag essays by non-native speakers at dramatically higher rates than native-speaker essays is not a calibration bug. It is the detector doing exactly what it was built to do: measure deviation from a manufactured norm. When the manufactured norm dissolves, the detector becomes a discrimination engine. The math has not failed. The category has.

A Second Wave reflex, confronted with a de-massifying signal, is to tighten enforcement of the norm. This is what we are watching. Institutions buy more detection licenses. Vendors stack more classifiers. Faculty are trained — in workshops that themselves cost real money — to recognize “AI tells”: em-dashes, the word “delve,” tripartite structures, hedged conclusions. Within a quarter, the models are retrained or the prompts are adjusted, and the tells migrate. The training was a sunk cost in obsolete pattern recognition. The literacy regime is asking faculty to chase a moving signal with a static instrument, and calling the resulting exhaustion “professional development.”

Across the AI Literacy corpus (1,425 articles, out of 6,636 in total, that track exactly this transition), one of the most consistent findings is the stance gap between faculty and students. Faculty skepticism toward generative AI sits well above student skepticism; student early adoption sits well above faculty adoption. Read through the detection lens, this looks like a discipline problem: students are using the tools, faculty are policing the use. Read through the de-massification lens, it looks like something else entirely. Students are operating in the de-massified production reality where text is co-produced, fluid, and continuously revised across human-machine exchanges. Faculty are operating inside the residual Second Wave contract where text has a single source and the source is morally accountable. Both groups are behaving rationally inside their respective regimes. The institution is asking detectors to bridge a gap that detectors cannot bridge because the gap is ontological.

Future Shock at the Reading Desk

Toffler’s Future Shock named a specific kind of suffering: the disorientation produced when the rate of change outstrips the human capacity to assimilate it. The book’s central image is not the futurist’s victorious dashboard but the ordinary person, intelligent and competent, suddenly unable to use their training to navigate the present. Future shock is professional competence rendered obsolete faster than competence can be rebuilt.

The faculty member asked to adjudicate AI use in student writing is in a textbook future-shock condition. Their training, often a decade or more of graduate study plus years of teaching, gave them a finely calibrated sense of how undergraduate prose develops, where it breaks, when it lifts. That sense was reliable across roughly a century of stable-enough authorship conditions. It became unreliable, in their classroom, in approximately eighteen months. The tools they are handed to compensate (Turnitin AI, GPTZero, Copyleaks) lag the threat by at least one model generation, and the vendors selling those tools have quietly walked back accuracy claims after independent audits. (Turnitin itself acknowledged within months of launch that its false-positive rates ran higher than it had initially claimed; OpenAI shut down its own AI classifier in July 2023, citing low accuracy. The company that made the model could not reliably detect the model’s output. This fact has not slowed the market for detectors made by companies with no such access.)

Future shock has a specific cognitive signature: the sufferer keeps applying the old tools harder, because the alternative is to admit that the tools no longer fit the world. We see this signature everywhere in current detection discourse. Faculty meetings spend hours debating whether a particular essay “feels” AI-generated. Departments draft AI policies, revise them, redraft them after the next model release, redraft again. Honor councils hold hearings in which the central evidence is a probability score from a black-box classifier whose vendor’s own terms of service disclaim its reliability for high-stakes decisions. The hearings continue anyway, because to stop holding them would be to concede that the authorship contract underneath them has lapsed.

This is also where the corpus reveals its sharpest contradiction. The same institutions producing prohibition policies through their academic integrity offices are simultaneously producing integration mandates through their teaching-and-learning centers, their career services, their employer-relations offices. Students are told, in the same week, that using ChatGPT on an essay is grounds for failure and that fluency with ChatGPT is a baseline employability skill. The policy left hand and the curricular right hand are not coordinating because they cannot coordinate: they are operating from incompatible ontologies of authorship. The prohibition side is defending the Second Wave contract. The integration side has already conceded the Third Wave production reality. The student is left to navigate the contradiction, often with worse information than the institution has, and is then disciplined for navigating it incorrectly.

Future shock at scale, institutionalized, looks exactly like this.

The Prosumer the Detector Cannot See

The concept that finally breaks the detection regime open is Toffler’s prosumer, introduced in The Third Wave and elaborated in Revolutionary Wealth. The prosumer is the actor who blurs the producer-consumer line: the homeowner who installs their own solar panels, the patient who self-monitors with wearables, the open-source contributor whose hobby is also infrastructure. Toffler saw prosumption as a structural feature of the Third Wave economy, not a niche behavior: value is increasingly created in the seam between traditional production and traditional consumption.

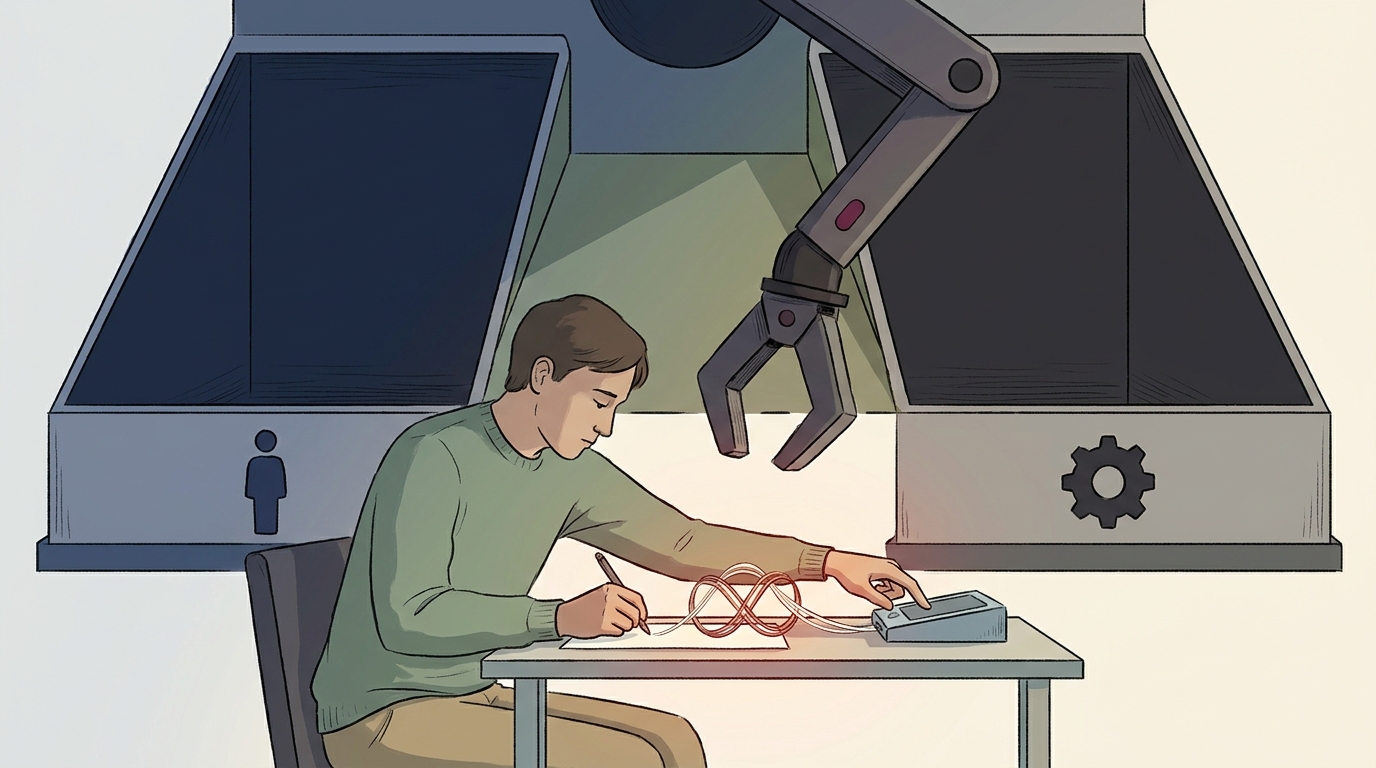

The writer working with a language model is a prosumer in exactly Toffler’s sense. They are not simply consuming a writing tool, as they did with autocomplete and spell-check. Nor are they purely producing original text: the model contributes phrasings, structures, even arguments. They are operating in the seam, iterating with the model, accepting some suggestions, rejecting others, prompting and re-prompting, editing the output, feeding it back. The artifact that emerges is neither human-authored in the Second Wave sense nor machine-generated in the science-fiction sense. It is prosumed.

The detection regime is structurally incapable of seeing prosumers. Its entire output space is binary: human or machine, authentic or inauthentic, original or generated. It has no slot for the seam. When prosumed text is fed to a detector, the detector returns one of the two available labels, and the label is then treated by the institution as a fact about authorship. It is not a fact about authorship. It is a forced choice imposed on a phenomenon that does not have two values.

This matters because prosumption is not going away. Every revision of a serious knowledge worker’s writing now happens in proximity to models. Lawyers draft with them. Programmers write with them. Journalists, marketers, researchers, doctors composing patient communications — the seam is becoming the default site of professional text production. The student who writes with a model is not engaged in deviance from the future of writing. They are engaged in rehearsal of the future of writing, often with less guidance than they need, and the institution’s detection apparatus is treating the rehearsal as the violation.

To say this plainly: the detection regime is punishing students for participating in a literacy practice already adopted by the very labor market the institution claims to prepare them for. This is not a tension to be managed. It is a contradiction at the level of what the institution thinks it is for.

The Vendors and the Vocabulary

A column committed to skepticism of power has to name the vendors. The detection-side market — Turnitin (owned by Advance Publications, formerly by private equity), GPTZero, Originality.ai, Copyleaks, Winston AI, Sapling, and a long tail of smaller entrants — is not a public-interest infrastructure. It is a subscription business whose addressable market is institutional anxiety. The more anxious the institution, the larger the contract. There is no version of this market in which the vendors say: actually, our product cannot reliably do what you are buying it for. The version that exists is the one where accuracy claims migrate into marketing copy and the disclaimers migrate into terms of service no procurement officer reads.

The other side of the market is structurally identical. “Humanizer” tools — Undetectable.ai, StealthGPT, WriteHuman, Phrasly, and dozens more — sell the inverse subscription. Their addressable market is student anxiety produced by the detection-side vendors’ marketing. The two sides are co-dependent; neither would have a business in the absence of the other. Some venture capital portfolios contain companies on both sides. The arms race is not a misfortune that has befallen the market. The arms race is the market.

The vocabulary deserves the same scrutiny. “AI detector” implies an instrument that detects a property of the text. What these tools actually compute is a probability score derived from a classifier trained on a labeled corpus. The score is not a measurement; it is an estimate, and the estimate’s reliability depends entirely on the resemblance of the input to the training distribution. “Humanizer” implies a tool that makes text human. What these tools actually do is perturb surface features (sentence length variance, lexical diversity, punctuation patterns) to push the input below the classifier’s threshold. They do not humanize anything. They evade a specific classifier. Strip the marketing and the mechanics are mundane.
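How mundane can be shown with a deliberately crude sketch. The Python below computes the three kinds of surface statistics named above and applies a one-feature decision rule to them. The helper names `surface_features` and `toy_flag`, the cutoff of 4.0, and the single-feature rule are all hypothetical, invented for illustration; no vendor’s classifier is this simple, and commercial detectors use learned models rather than one hand-set threshold. What the sketch does capture is the shape of the exchange: a score computed from surface statistics, a threshold, and a rewrite that moves the statistic across it.

```python
import re
import statistics


def surface_features(text):
    """Return three crude surface statistics of a text.

    Hypothetical helper for illustration only: it mirrors the kinds of
    features discussed in the column, not any vendor's feature set.
    """
    # Split on sentence-ending punctuation and drop empty fragments.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    words = re.findall(r"[a-z']+", text.lower())
    n_words = len(words) or 1  # avoid division by zero on empty input
    return {
        # Population variance of sentence lengths in words (0 when all equal).
        "sentence_length_variance": statistics.pvariance(lengths) if lengths else 0.0,
        # Type-token ratio: distinct words divided by total words.
        "type_token_ratio": len(set(words)) / n_words,
        # Commas, semicolons and colons per word.
        "punctuation_per_word": len(re.findall(r"[,;:]", text)) / n_words,
    }


def toy_flag(text, threshold=4.0):
    """Hypothetical one-feature 'detector': flag text whose sentence
    lengths are too uniform. The threshold is invented for illustration."""
    return surface_features(text)["sentence_length_variance"] < threshold


uniform = "The model writes clearly. The model writes evenly. The model writes often."
# Similar wording, rearranged so that sentence lengths vary widely.
perturbed = ("The model writes. Clearly, evenly, and often, the model writes "
             "in sentences of wildly varying length. Often.")

print(toy_flag(uniform))    # True: variance is 0, below the threshold
print(toy_flag(perturbed))  # False: variance is about 27.6, above it
```

Nothing about who wrote either string differs between the two calls; only a surface statistic moved. That is the whole of what a “humanizer” has to accomplish against a classifier of this kind, and the whole of what the classifier was ever measuring.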