
Through McLuhan’s Lens

The Detection Arms Race

April 26, 2026 | 2667 words

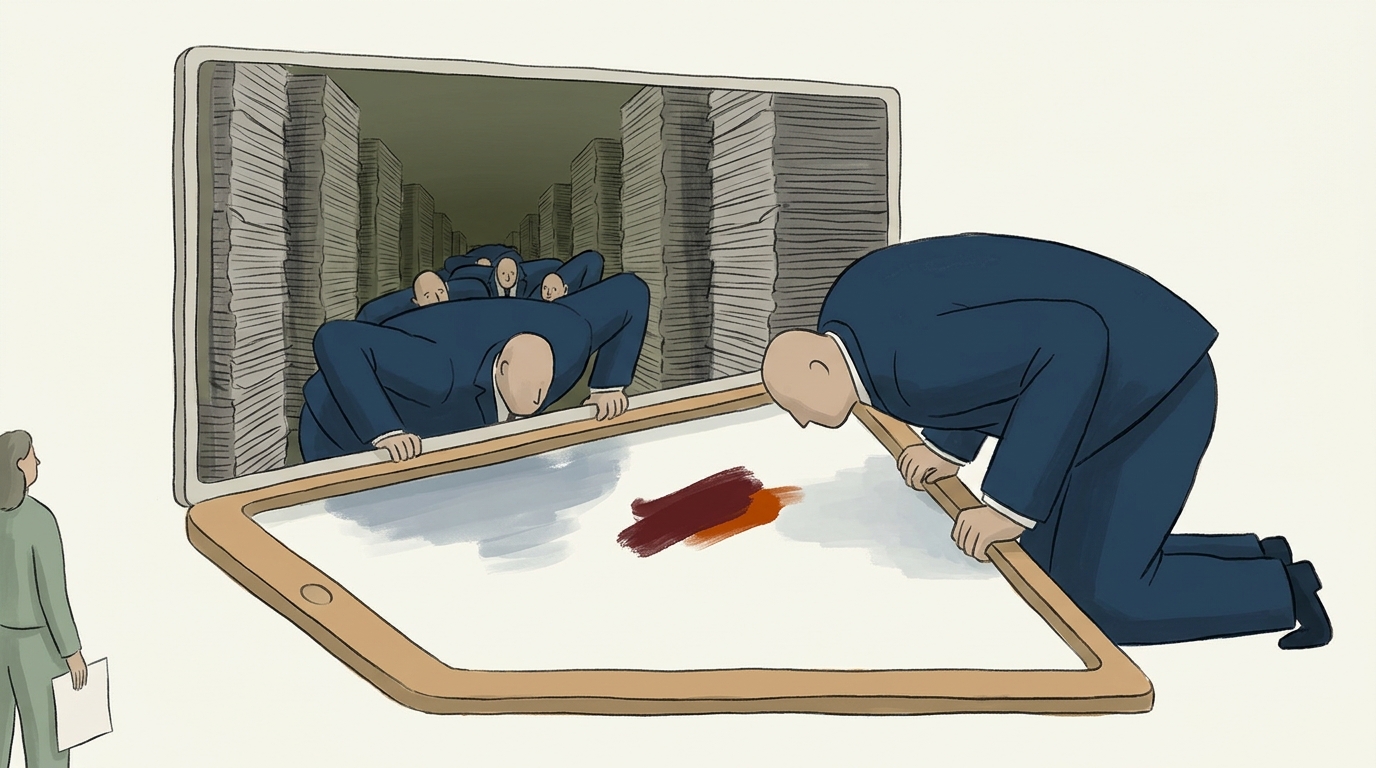

Through McLuhan’s Lens: The Arms Race That Is Its Own Product

In October 2024, a student at the University of Minnesota posted screenshots of an exchange that briefly captured the attention of a small corner of the internet. She had submitted a piece of writing she had composed by hand, on paper, while her roommate watched. A detection tool flagged it as 79% AI-generated. She appealed. The appeal process required her to run her draft through a different detector to demonstrate her innocence. The second tool returned 12%. The first tool, asked to re-evaluate, now returned 64%. Nothing about the document had changed. The institution treated the discrepancy as a procedural matter to be resolved by adding a third tool to the workflow.

This is the texture of the detection arms race in its mature form: not a contest between truth and deception, but a recursive loop in which probabilistic verdicts are appealed to other probabilistic verdicts, and each new tool added to the stack is sold as the answer to the noise generated by the last. The vendors of detection software have raised, by some industry tallies, more than $400 million in the past two years. The vendors of generation software have raised orders of magnitude more. Both sets of vendors sell to the same institutions. In many cases, both sets of vendors are owned by the same investors. The customer pays twice — once to introduce the problem, once to manage it — and the ground beneath the customer’s feet keeps shifting because the tools on both sides update weekly.

A reader watching this from the outside might assume the question worth asking is: who is winning? That is the question the trade press asks. It is the wrong question, and the wrongness of it is the subject of this column.

The Figure and the Ground

Marshall McLuhan’s most useful and most resisted move was to pull attention away from the content of a medium and toward the medium itself, a shift he later framed, borrowing from Gestalt psychology, as the distinction between the figure (what we are looking at) and the ground (the environment that shapes what looking at it does to us). The figure in the detection arms race is the cat-and-mouse game: better generators produce text that defeats current detectors; better detectors are trained on the latest generators; the cycle iterates. Trade publications cover the figure obsessively. Procurement officers benchmark the figure. Universities, law firms, publishers, hiring platforms, and government agencies allocate budgets according to the figure’s quarterly state.

The ground is something else entirely. The ground is what happens to the relationship between a writer and a reader when a probabilistic classifier is permanently installed between them. The ground is what happens to institutional trust when authorship becomes a number. The ground is what happens to the concept of “writing” itself when the artifact must be defensible against a tool whose criteria are proprietary, unstable, and not legible to the writer being judged.

McLuhan’s central claim — that the medium is the message — was not a slogan but an analytic instruction. It said: stop arguing about whether a particular piece of content on television is good or bad; the consequential thing is what television, as a sensory environment, is doing to a population that spends six hours a day inside it. Applied to the present case, the instruction is austere. Stop arguing about whether GPTZero or Turnitin or Originality.ai catches the right percentage of AI text. The consequential thing is what the permanent installation of detection-as-mediation does to writers, readers, and the institutions that house them.

The content of detection is catching cheaters. The message of detection is that authorship is now a forensic question, that every piece of writing exists under suspicion until cleared, that the reader’s first relationship with a text is no longer interpretation but verification. That message lands regardless of whether any individual detector works. It lands especially well when detectors don’t work, because malfunctioning detectors generate more procedure, more appeals, more meetings, more vendor calls — more of the surveillance environment, not less.

The Frame That Makes the War Thinkable

To see how completely this ground has been naturalized, consider how the arms race is described in the discourse that surrounds it. A recent analysis of 6,636 articles on AI in institutional settings found that the dominant frames were tool and threat. The partner frame — AI as a collaborator, as something one writes with rather than against — appeared in fewer than 5% of articles. This is not a neutral distribution. It is a precondition.

The arms race is unthinkable inside a partner frame. If a writer is composing alongside a generative tool the way a musician composes alongside a sampler or a photographer alongside a darkroom, the question “did a human write this?” becomes incoherent — not because the answer is hard but because the question has the wrong shape. The arms race requires the threat frame to be intelligible. It requires that AI text and human text be ontologically distinct categories, that one be authentic and the other counterfeit, that institutional energy properly flows toward maintaining the boundary between them.

The 95%+ dominance of tool/threat framing in the discourse is therefore not a description of how people feel about AI. It is the substrate that makes a $400 million detection industry economically possible. Detection vendors do not need to convince customers that their products work. They need only to keep the threat frame oxygenated. The frame sells the product; the product reinforces the frame; the frame sells the next product. McLuhan, in The Medium Is the Massage, described how a medium “works us over completely” — how it rearranges the sensory ratios of those who live inside it without their consent or awareness. The threat frame has worked over the discourse. The arms race is what the worked-over discourse looks like when it tries to act.

The Rear-View Mirror

McLuhan observed that human beings tend to “march backwards into the future” — to perceive new environments through the categories of the old one. He called this the rear-view mirror. The first automobiles were called horseless carriages. The first television programs were filmed stage plays. Each new medium is initially understood as a defective version of its predecessor, and that misunderstanding shapes the early architecture of how the medium gets used and governed.

Detection tools are a rear-view mirror technology in a particularly acute sense. They are built on the premise that “human writing” is a stable, recognizable artifact with consistent stylistic fingerprints — burstiness, perplexity, lexical idiosyncrasy — that distinguish it from machine writing. This premise was approximately true in 2021. It was already strained by 2023. By 2025, it describes a world that no longer exists. The writers being scanned by detectors have, in many cases, spent two years drafting alongside generative tools, absorbing their cadences, smoothing the burstiness out of their own prose because the tools made smooth prose easier to produce. The “human” baseline against which detectors measure has been continuously contaminated by the very tools detection was built to identify.
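For readers who want the “burstiness” fingerprint made concrete, here is a minimal sketch. It assumes burstiness can be approximated as the variation in sentence length relative to the mean, which is a common simplification and not any vendor’s disclosed method. The function names and sample sentences are hypothetical, invented for illustration.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! and ? and return the word count of each non-empty sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Assumed proxy: population std of sentence length divided by the mean."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # a single sentence has no variation to measure
    return statistics.pstdev(lengths) / statistics.mean(lengths)


# Hypothetical samples: same content, different rhythm.
uneven = "No. The committee met for six hours and decided nothing at all. Why? Nobody could say."
even = "The committee met for six hours. The members decided nothing at all. Nobody could say why."

print(f"uneven rhythm: {burstiness(uneven):.2f}")
print(f"even rhythm:   {burstiness(even):.2f}")
```

The uneven sample scores higher than the even one. A writer who has spent two years smoothing their rhythm would, under this toy measure, drift toward the lower figure without any machine having touched the page.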

Detection drives forward by looking backward, toward a pre-2022 textual ecology that the rest of the world has already left. This is why a handwritten essay can score 79% AI-generated. The detector is not malfunctioning. It is correctly identifying that the student’s prose has the statistical properties of contemporary writing, which is to say, of writing produced inside an ecology saturated by generative models. The detector is, in a strict sense, telling the truth: this writing belongs to the post-2022 world. It just cannot say what that means, so it reports a percentage and lets the institution mistake the percentage for a verdict.

One point worth making plain: when a detector returns “87% AI-generated,” it is not estimating the probability that AI wrote the text. It is estimating the degree to which the text resembles the statistical profile of AI-generated text in the detector’s training set, which is itself a moving and contested artifact. The number is not a measurement of authorship. It is a measurement of stylistic distance from a baseline that the detector’s vendor has chosen and will not disclose. Procurement language calls this “AI detection.” It is more accurately described as proprietary stylometry sold as forensics.
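The dependence on a chosen baseline can be shown with a toy model. Everything in this sketch is an assumption made for illustration: the single style feature, the two hypothetical vendors, their baseline numbers, and the Gaussian-shaped scoring rule. No real detector is known to work this way.

```python
import math


def resemblance_score(feature: float, baseline_mean: float, baseline_std: float) -> float:
    """Map distance from a vendor-chosen 'AI-like' baseline to a 0-100 score.

    The closer the feature sits to the baseline mean, the higher the score.
    The Gaussian kernel is an illustrative choice, not a disclosed method.
    """
    z = abs(feature - baseline_mean) / baseline_std
    return round(100 * math.exp(-0.5 * z * z), 1)


# One unchanged document, summarised by one hypothetical style feature.
document_feature = 0.40

# Two hypothetical vendors that chose different baselines for "AI-like" text.
score_a = resemblance_score(document_feature, baseline_mean=0.35, baseline_std=0.10)
score_b = resemblance_score(document_feature, baseline_mean=0.15, baseline_std=0.12)

print(f"Vendor A: {score_a}% 'AI-generated'")
print(f"Vendor B: {score_b}% 'AI-generated'")
```

The document is identical in both calls, and only the baseline moves. The gap between the two printed figures comes entirely from the vendors’ choices, which is the sense in which the number measures distance from a baseline and not authorship.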

Extension and Amputation

Among the most uncomfortable of McLuhan’s claims, developed across Understanding Media, was that every technological extension of a human capacity entails a corresponding amputation — a numbing of the faculty being extended. The wheel extends the foot and amputates the leg’s power. The book extends memory and amputates oral recall. The clock extends the perception of duration and amputates the body’s relationship to circadian time. The amputation is not a side effect; it is the price of the extension, and McLuhan’s particular hostility was reserved for societies that took the extension without acknowledging what they had given up.

What is being amputated when authorship verification is outsourced to a probabilistic classifier?

The first amputation is editorial judgment. Before detection tools, a reader who suspected that a piece of writing was not the writer’s own work had to articulate why — to point to a shift in voice, a vocabulary the writer had not previously used, an argument the writer could not defend in conversation. That articulation was a skill, and it was distributed across editors, teachers, hiring managers, peer reviewers. The skill required engagement with the text and with the writer. Detection tools extend this skill — they make verification faster, scalable, “objective” — and amputate it. A generation of editors and instructors is now being trained to outsource the judgment to the tool first and engage with the text only if the tool flags it. The faculty atrophies. The faculty was the relationship.

The second amputation is the writer’s authority over their own work. A writer who is told by a tool that their writing is 87% AI-generated has no way to contest the verdict on its own terms, because the terms are not disclosed. The writer can only produce more writing — handwritten, screen-recorded, version-controlled — to be fed into the same or other tools, hoping the next verdict is more favorable. The writer has been amputated from the position of being the authoritative source on their own authorship. That position is now occupied by a vendor.

The third amputation, and the most consequential, is institutional patience. Institutions that adopt detection tools are extending their capacity to process large volumes of writing under suspicion. They are amputating their capacity to operate without that suspicion. Once the tool is installed, the workflow assumes the tool. The procedure assumes the tool. The appeal process assumes the tool. Removing it becomes administratively expensive, and so the tool stays even when its outputs are known to be unreliable, even when the costs of false positives are documented, even when the writers being judged include the institution’s own staff. The institution has extended itself into a permanent posture of forensic doubt and amputated its memory of operating any other way.

The Hot Score and the Cool Conversation

McLuhan’s distinction between hot and cool media, developed in Understanding Media, was that hot media are high-definition and low-participation: they fill in the detail and leave little for the audience to complete. Cool media are low-definition and high-participation: they invite the audience to contribute, interpret, fill in. A photograph is hot; a cartoon is cool. A lecture is hot; a seminar is cool.

A detector’s output — “87% AI-generated” — is one of the hottest media artifacts ever deployed in institutional decision-making. It is a number with two significant figures, presented with no error bars, no methodology, no context, and no invitation to interpretation. It forecloses the conversation it ought to open. The institution that receives the number does not deliberate; it acts. The writer who receives the number does not discuss; they appeal. The temperature of the artifact is so high that it burns away the cool, slow, participatory work of actually evaluating a piece of writing in conversation with its author.

This is one reason institutions adopt detection tools even when they suspect the tools are unreliable. The hot output is administratively useful. It permits decisions to be made quickly, by people who do not have time to read carefully, in volumes that do not permit careful reading. The score is not really a finding; it is a permission slip — a way of converting the slow, contested, irreducibly human work of judging writing into something that can be scaled across a workflow. The vendors are not selling accuracy. They are selling the ability to act decisively on writing one has not read.

The Mirror, the Number, and the Narcosis

There is a striking imbalance in the conceptual vocabulary of contemporary AI discourse. In the same corpus of 6,636 articles, governance appears 112 times. Transparency appears 17 times. The ratio is 6.6 to 1. Governance saturates the conversation; transparency is marginal.

This is not a quirk. Detection is a governance technology. It allows an institution to do something about AI without having to understand anything about AI — without having to disclose what tools are sanctioned, what uses are legitimate, how decisions about authorship will actually be made, what recourse a writer has, what data the detector was trained on, what it was trained against. Governance language is procedural; transparency language is substantive. The arms race runs entirely on procedural language. It would collapse under transparency requirements, because no detection vendor can disclose its training data without enabling the next generation of evasion, and no generation vendor can disclose its training data without inviting litigation. The two industries are bound together in a shared interest in opacity. Governance flourishes in that opacity. Transparency starves.

McLuhan, drawing on the Narcissus myth in Understanding Media, warned that humans tend to fall in love with their technological extensions, mistaking them for parts of themselves rather than recognizing them as numbing prosthetics. He called this Narcissus narcosis: the trance in which the user becomes a “servomechanism” of the tool, adapting to it rather than questioning it. The institution that stares into the detector’s output and sees an objective verdict is in this trance. The number on the screen is a reflection of the institution’s own anxieties (about scale, about trust, about its inability to read carefully at the volumes it now operates in), rendered as a percentage and mistaken for diagnosis. The institution is not learning anything about the writer. It is looking at itself and calling the reflection truth.

The discourse gaps confirm this. The voices that are missing from the arms-race conversation are precisely the voices that would break the trance: the writers and users being scanned, who could describe what the experience does to their relationship with their own writing; researchers documenting the long-term cognitive effects of composing under permanent surveillance; non-Western perspectives that might frame authorship and authenticity in ways that do not assume the proprietary individual author of post-Romantic Anglo-American convention. None of these voices appear at scale in the discourse. The conversation is conducted entirely between vendors, procurement officers, and the trade press that covers them. The reflection is uninterrupted.

The Arms Race as Its Own Product

This brings us to where the column has been heading. The detection arms race is typically described as a problem in search of resolution: better tools, clearer policies, eventual equilibrium. The framing assumes that the energy spent on the war is incidental to the war’s purpose, that the goal is some future state in which detection has either succeeded or been abandoned and the institutions involved can return to ordinary operation.

This framing is wrong. The energy is the product.

The arms race produces a continuous stream of vendor revenue, procurement cycles, policy revisions, appeal procedures, training sessions, compliance dashboards, and trade-press coverage. It produces an entire economy of mediation between writers and readers — an economy that did
