Sovereignty by Subscription

The Longer View

I. The question of the week

In December 2024, a piece in The National used the phrase “techno-colonialism” to describe what AI bias was doing to the Global South. By August 2025, a Trinidadian writer in The Guardian was warning that the digital age would perpetuate the inequalities of the colonial age. Between those two moments, eight African countries published national AI strategies, the UAE signed a partnership with Rwanda and Malaysia, Google launched three governmental initiatives in Latin America, and the World Bank started talking about “local realities.” The rhetoric of sovereignty traveled a great distance in nine months. The infrastructure did not.

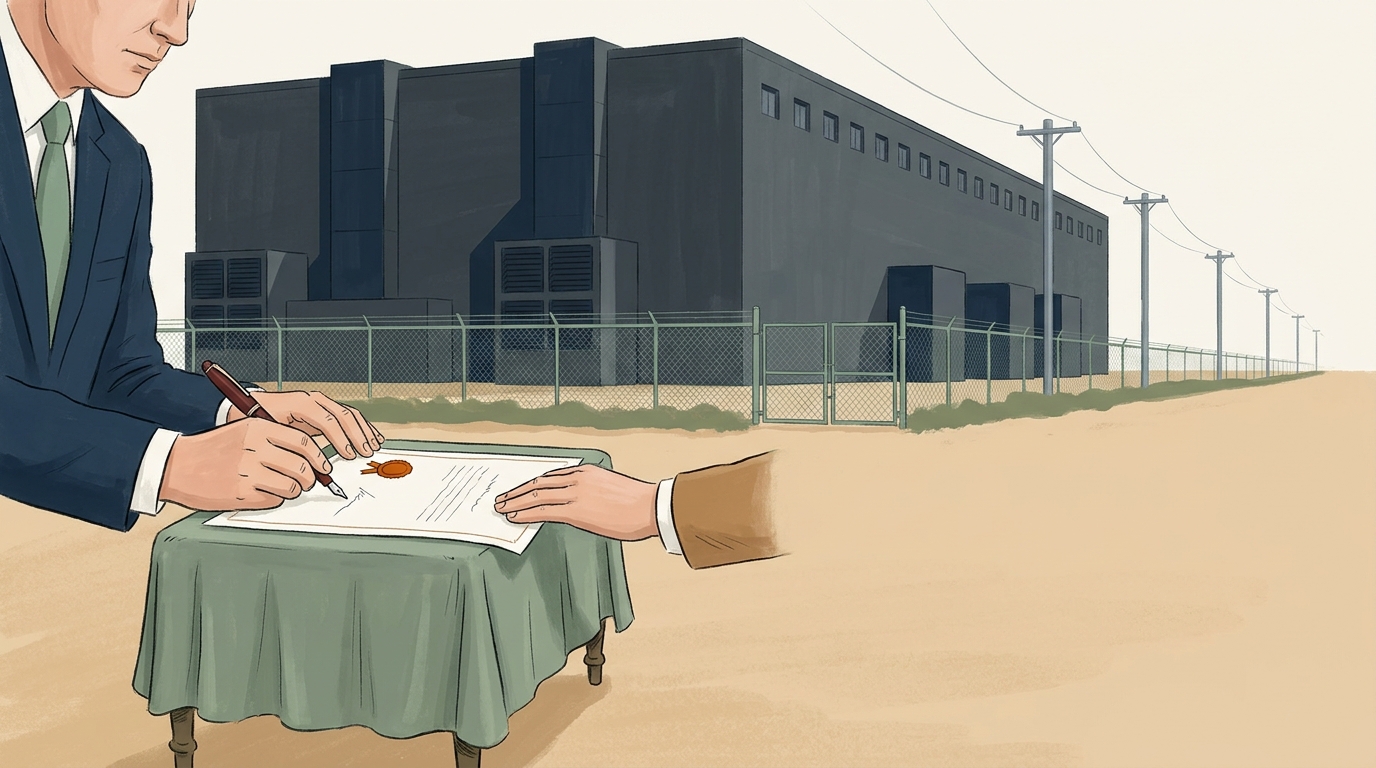

This week the column takes the arc of “AI sovereignty” — what the Global South has been saying about its own AI future, and what has actually been built or imported in its name. The tension that surfaces is not the familiar one between technology and tradition, nor between adoption and refusal. It is between the documents being signed and the data centers doing the work. A national strategy is a piece of paper. A model trained on someone else’s corpus, hosted on someone else’s cloud, governed by someone else’s terms of service, is a fact on the ground. The question is whether the optimistic vocabulary that has come to dominate the conversation — leadership, partnership, leapfrogging, framework — has been describing a movement toward sovereignty or papering over its absence.

The arc holds zero inversion points across four quarters. In no quarter do critical framings overtake positive ones; the framing stays positive throughout. That is itself a finding worth examining: when a discourse refuses to admit a setback, the analyst has to ask whether the absence of contradiction reflects an absence of problems or an absence of permission to name them. The argument that follows is that it is the second, and that the optimism has come at a price.

II. What we’ve been saying

The earliest sustained framing came in late 2024 from journalists and policy analysts who were still willing to use the word colonialism. How AI bias is impacting the Global South in new wave of ‘techno-colonialism’, published in The National, made the diagnosis directly: training data dominated by Western corpora was producing systems that misread, mislabeled, and mispriced the rest of the world. Within the same month, South Africa: Artificial Intelligence Regulation in South Africa - Prioritising Human Security reframed the regulatory conversation around human security — a phrase borrowed from the post–Cold War development vocabulary, deployed here to argue that the EU’s AI Act and the G20 agenda were leaving African priorities on the cutting-room floor.

The first quarter of 2025 saw the rhetoric shift register. The diagnostic word colonialism largely receded. In its place came the architectural words: framework, blueprint, ethics. AI Literacy Framework for the Global South: From Margins to Momentum cast the South as moving from spectator to participant. AI-Driven Progress: How Indonesia and India are Redefining the Global South’s Digital Future elevated two specific countries to redefiners of a category they had previously been the objects of. The World Economic Forum’s Blueprint for Intelligent Economies - AI Competitiveness through Regional Collaboration 2025 generalized the move: regional collaboration, not national capacity, became the unit of analysis. A discussion paper from the Research and Information System for Developing Countries, AI Ethics for the Global South: Perspectives, Practicalities, and India’s role, pushed India forward as the natural ethical broker between North and South.

By the second quarter the optimism had organized itself into a small set of recurring claims. Latin America’s regulatory tradition — its constitutional rights culture, its absence of legacy tech monopolies — was now an asset rather than a deficit. AI regulation offers development opportunity for Latin America made the argument; Smart AI regulation strategies for Latin American policymakers elaborated it from Brookings. Ghana and Rwanda were elevated to case studies in AI policies in Africa: lessons from Ghana and Rwanda, which presented them as small countries with crisp documents that other governments could imitate. The World Bank blog post AI synergy: Letting local realities guide global AI innovation toward impact coined a formulation that quickly traveled: the innovation is global, the impact is local, and the connective tissue is “synergy.” The verb “leverage” began to displace the verb “build.”

The third quarter consolidated the optimism into the language of human development. The UNDP’s regional director, writing in Generative Human Development: AI As a Supercharger of Progress In The Asia Pacific, framed AI as a supercharger — a word that does some work, since it concedes the existing engine is foreign and casts the South’s contribution as fuel injection. A peer-reviewed conference paper, Determinants of Ethical and Scalable AI for the Sustainable Development Goals: A Qualitative Framework from the Global South, translated the hopes into the technocratic register of SDG implementation. Are we ready to meet the expectations of AI for development?, reporting on a global survey conducted for the 2025 Human Development Report, found that roughly one in five respondents was already using AI tools — a number deployed as evidence of readiness rather than evidence of dependency.

A counter-current persisted, but quietly. What will the AI revolution mean for the global south? returned to the colonial frame in The Guardian, written by a commentator from Trinidad and Tobago who refused to drop the historical analogy. Controversial AI Policies in Africa: Navigating Exploitation, Surveillance, and the Digital Divide noted in September that the eight African countries with national AI strategies were drafting them inside an active “exploitation, surveillance” environment that the strategies themselves did not constrain. There are challenges and opportunities for Africa in the AI revolution, at the LSE Africa blog, was more diagnostic than its title — “unchecked, AI could reinforce structural inequalities” — but its warnings sat as caveats inside a larger story of opportunity.

Over four quarters, then, a vocabulary settled. Sovereignty became leadership. Dependency became partnership. Extraction became synergy. Colonialism became digital divide. Each substitution was defensible in isolation; together they constituted a managed retreat from the conceptual ground that the late-2024 reporting had briefly held.

III. What’s been happening

The infrastructure tells a different story than the framework documents. Google’s Partnering with Latin American governments on 3 new AI initiatives cited the company’s own Ipsos survey to report that 69% of Mexicans, 61% of Brazilians, and 58% of Argentines were “excited” about AI, and used those numbers to introduce direct partnerships between the firm and national governments. Not with regional regulators. Not with public universities. Not with the constitutional rights traditions that the Brookings piece had just praised. The partnership was rhetorical at the level of “collaboration” and infrastructural at the level of cloud contracts.

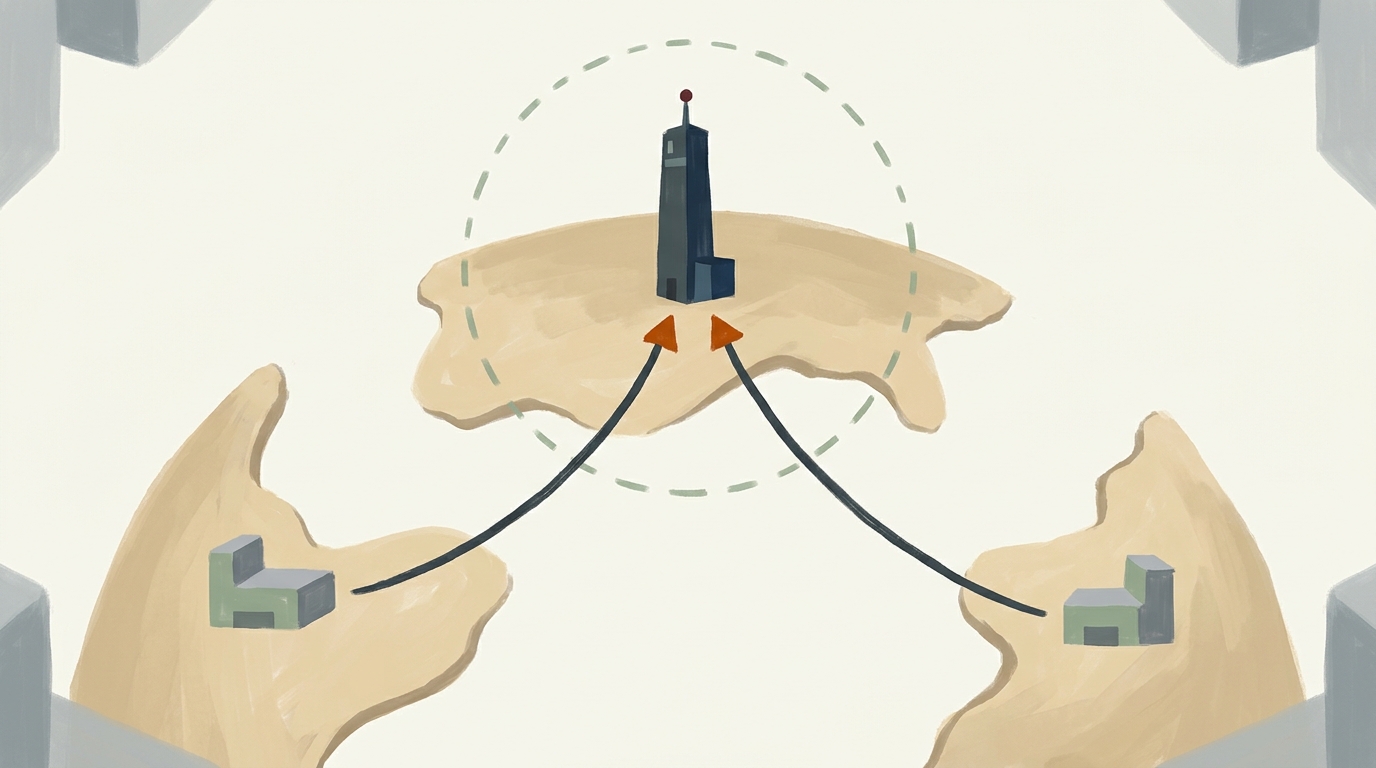

In August, UAE-led AI pact aims to narrow digital divide in Global South reported on a partnership between the UAE, Malaysia, and Rwanda — three states with active sovereign-wealth or state-led investment vehicles — for “ethical AI use and knowledge sharing.” The pact’s structure put a Gulf state in the position of broker between its two partners, mirroring at a regional scale the same hub-and-spoke geometry that Northern firms have long used with Southern markets. South–South cooperation in form; capital concentration in substance.

The educational layer is the clearest case. Digital Technology and Artificial Intelligence : a revolution for African higher education?, published in late September 2025, surveyed African universities adopting personalized learning pathways, assessment diagnostics, and distance-learning platforms. The systems doing that work — the LMS integrations, the proctoring tools, the writing assistants — are without exception products of firms headquartered outside the continent. The pedagogical sovereignty being claimed in faculty senates runs on infrastructure leased from Redmond, Mountain View, and Beijing.

Kate Crawford’s The Atlas of AI - Power, Politics, and the Planetary Costs names this geometry plainly. AI, Crawford writes, is “a manifestation of highly organized capital backed by vast systems of extraction and logistics, with supply chains that wrap around the entire planet.” The phrase “supply chains that wrap around the entire planet” is the part the development blogs leave out. A Rwandan ministry signing a partnership memorandum in Kigali is not stepping outside that supply chain; it is signing onto a node of it. The same volume names “the growing commercial surveillance sector, which aggressively markets its tools and platforms to police departments and public agencies” — a sector whose customer base is increasingly global. The September 2025 piece on African policy lists surveillance among the active risks the new strategies do not address.

The fiscal picture has shifted, but not in the direction the rhetoric implies. Ask not what your government can do for you, but what AI can do for your government, published in the Mail & Guardian, surveyed the ways AI was being procured for South African public administration and found the procurement architecture pulling capacity outward — services contracted to international vendors rather than built in-country. The optimistic case for “AI for government” turned out, on inspection, to be a case for AI vendors selling to government.

What of the documents themselves? The eight African national strategies catalogued in the September piece are real, and they vary. Rwanda’s is more detailed than most; Ghana’s foregrounds skills and SMEs; South Africa’s, as the December 2024 piece noted, is anchored in human security. The Conversation’s AI policies in Africa: lessons from Ghana and Rwanda treated the documents seriously and found them aspirational — long on principles, short on enforcement, near-silent on the question of who owns the compute. Latin American regulation has progressed further on paper, but the Smart AI regulation strategies recommendations make clear that the region’s main strategic asset, in the analysts’ eyes, is its willingness to align with EU-style frameworks — to import a regulatory architecture rather than construct one.

The question of language deserves its own paragraph. Several development pieces, including the World Bank’s AI synergy, gesture at the importance of low-resource languages and locally relevant corpora. The work of building those corpora is genuinely under way in places — Masakhane for African languages, AI4Bharat for Indian languages, smaller efforts in Latin America — but the article evidence assembled here does not name them, and the procurement decisions documented in it do not appear to be routing public money toward them. The frameworks talk about local realities; the contracts buy global platforms.

The picture, then, is this. Strategy documents proliferate. Partnerships are signed. Surveys report excitement. The actual capital — chips, data centers, foundation models, surveillance platforms — remains overwhelmingly outside the jurisdictions whose sovereignty is being celebrated. As this publication’s 2025-08-05 briefing on AI and social aspects observed, the rhetorical democratization of AI has consistently outpaced its material redistribution. The Global South arc is the same pattern at planetary scale.

IV. Where they meet, where they miss

The rhetoric and the reality meet most fully at the level of policy ambition. Governments in Abuja, Kigali, Brasília, Jakarta, and New Delhi do want a different relationship with AI than the one their citizens currently have. The strategies are not cynical. The phrase “human security” in the South African document, the priority on local-language data in the Indian discussion paper, the rights-first instinct in Latin America’s regulatory tradition — these are not marketing. They are commitments, made in good faith by people who often understand the stakes more clearly than the foreign vendors who eventually sit across the table from them.

Where they miss is at the layer below policy: where compute lives, who owns the model weights, which jurisdiction’s courts can subpoena the logs. The vocabulary of sovereignty has become detachable from these questions. A country can declare an AI sovereignty agenda, sign a partnership with a foreign cloud provider to host its citizen-services chatbot, and treat the two acts as compatible. They are compatible only if sovereignty has been redefined as procurement preference — the right to choose which foreign vendor, on which terms, for which use case. That is not what the word has historically meant.

AI Ethics - The MIT Press Essential Knowledge series makes a related point about explanation. The GDPR, the volume notes, “provides a right to be informed about automated decision making but does not seem to demand an explanation about the rationale for any individual decision.” The structure is recognizable in the Global South sovereignty documents: a right to be informed about AI, without a corresponding right to know how, on whose hardware, by whose model, with what training data, the decisions are being made. The framework is enacted; the substance escapes.

There is a second miss, harder to name. The optimism that has dominated the four-quarter arc — positive framings running ahead of critical ones in every quarter — is not only a rhetorical choice. It is a political settlement. Critical framings of AI sovereignty in the South do not get airtime in the same outlets that publish the optimistic ones, in part because the development institutions have a lane to occupy and that lane requires forward motion. The result is a public conversation in which the strongest critique — that this is techno-colonialism with new manners — appears mainly in op-eds by writers from the Caribbean and in one-off pieces in African policy outlets, while the architecture of “partnership” is described in white papers by the institutions doing the partnering.

The library voice that makes this most concrete is Crawford again. The Atlas of AI insists that what gets called artificial intelligence is “a complex set of expectations, ideologies, desires, and fears” mapped onto a two-word phrase. The Global South sovereignty discourse loads that phrase with a particular set of expectations — leapfrogging, development, justice — and the loading itself becomes a way of not seeing the supply chain underneath. UNESCO’s Think Critically Click Wisely module asks readers to bring critical media-literacy habits to AI; the same habits applied to the policy literature would notice how often the verb “harness” appears next to the noun “potential,” and how rarely the verb “build” appears next to the nouns “fab” or “data center.”

A real sovereignty agenda would distinguish between AI used in the Global South and AI from the Global South. The article evidence suggests the second category is still small enough that almost every claim about the first elides the difference.

V. The longer view

The four-quarter arc records a vocabulary catching up to a reality, and a reality outrunning the vocabulary. The Global South now has a recognizable AI sovereignty discourse — language, institutions, conferences, white papers, ministerial portfolios. It does not yet have, in most places, sovereign compute, sovereign models, sovereign data flows, or sovereign jurisdiction over the systems being deployed inside its borders. The first achievement is real and was harder to win than it looks. It is also, at present, doing the work of standing in for the second.

The honest reading of the optimism is not that it is wrong but that it is premature. The pieces from late 2024 that used the word techno-colonialism were not making a category error; they were making a temporal one — describing as the present a structure that was about to become the present everywhere, including in the very documents now declaring its supersession. To read the September 2025 catalog of African strategies as a victory is to mistake the existence of a national plan for the possession of a national capacity. The plans matter. They are not the capacity.

A sovereignty signed on someone else’s cloud is a lease.

References

- Controversial AI Policies in Africa: Navigating Exploitation, Surveillance, and the Digital Divide

- Partnering with Latin American governments on 3 new AI initiatives

- How AI bias is impacting the Global South in new wave of ‘techno-colonialism’

- South Africa: Artificial Intelligence Regulation in South Africa - Prioritising Human Security

- AI Literacy Framework for the Global South: From Margins to Momentum

- AI-Driven Progress: How Indonesia and India are Redefining the Global South’s Digital Future

- Blueprint for Intelligent Economies - AI Competitiveness through Regional Collaboration 2025

- There are challenges and opportunities for Africa in the AI revolution

- AI Ethics for the Global South: Perspectives, Practicalities, and India’s role

- Ask not what your government can do for you, but what AI can do for your government

- AI regulation offers development opportunity for Latin America

- AI policies in Africa: lessons from Ghana and Rwanda

- AI synergy: Letting local realities guide global AI innovation toward impact

- Smart AI regulation strategies for Latin American policymakers

- Generative Human Development: AI As a Supercharger of Progress In The Asia Pacific

- Determinants of Ethical and Scalable AI for the Sustainable Development Goals: A Qualitative Framework from the Global South

- What will the AI revolution mean for the global south?

- UAE-led AI pact aims to narrow digital divide in Global South

- Are we ready to meet the expectations of AI for development?

- Digital Technology and Artificial Intelligence : a revolution for African higher education?

- The Atlas of AI - Power, Politics, and the Planetary Costs

- AI Ethics - The MIT Press Essential Knowledge series

- UNESCO Think Critically Click Wisely